Sora: The Impact of OpenAI's Text-to-Video Model on Filmmakers

Core Concepts

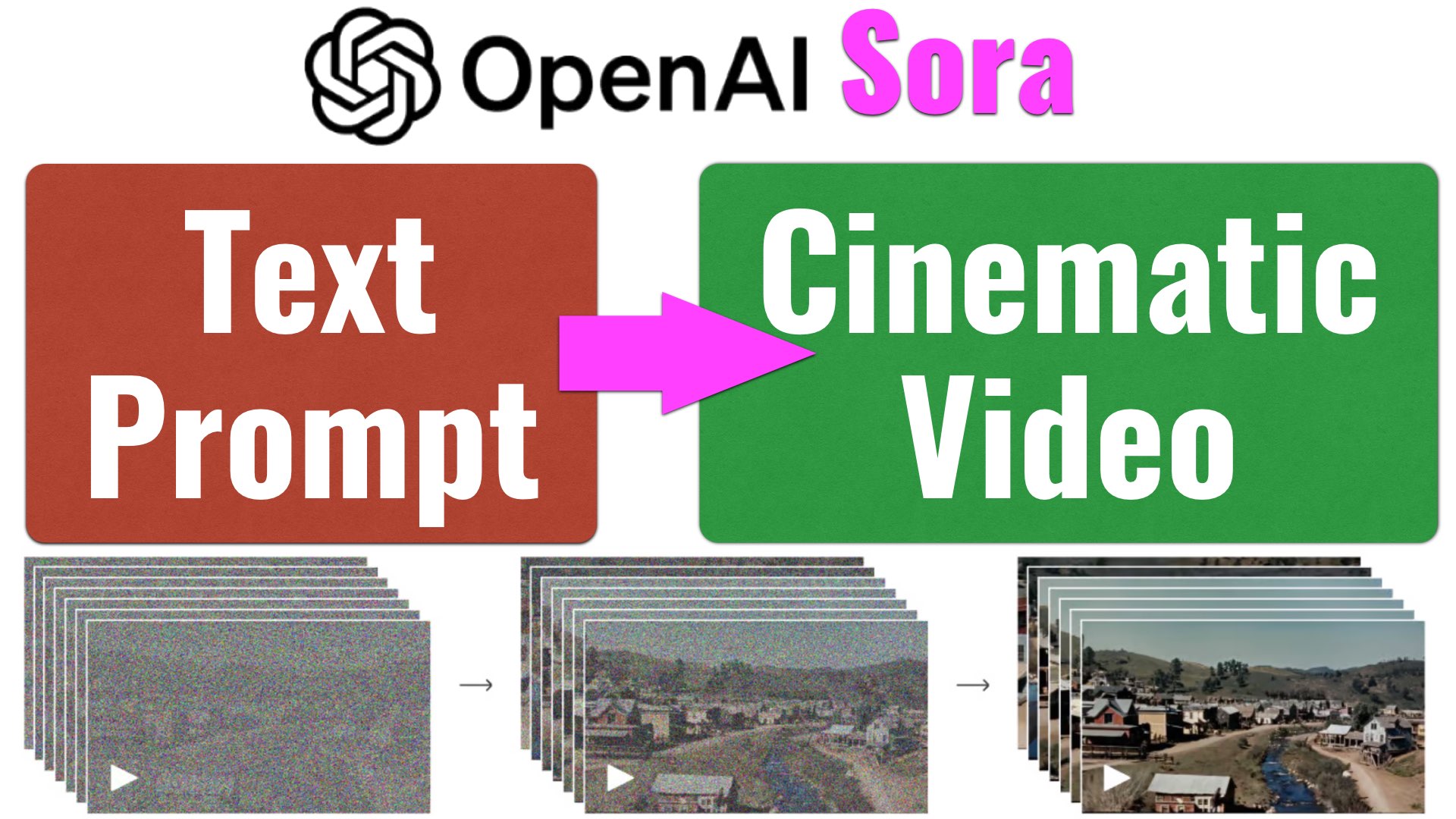

OpenAI introduces Sora, a text-to-video model that raises concerns and creates opportunities for filmmakers.

Abstract

OpenAI has unveiled Sora, a text-to-video model that can generate cinematic videos from short text prompts. While it offers new creative possibilities, it also raises concerns about its impact on filmmakers' jobs and the authenticity of AI-generated content. Sora's capabilities include creating complex scenes with multiple characters and specific motions, extending existing videos, and changing a video's style or environment. However, the model still has weaknesses in accurately simulating physics and cause-and-effect relationships, which are expected to improve over time. The safety measures taken by OpenAI aim to address the risks of misleading content generated by Sora. Despite these advances in AI technology, questions remain about the future implications for filmmakers and the authenticity of video content.

Sora (text-to-video model) Announced: Game Over for Filmmakers? - Y.M.Cinema Magazine

Stats

"Sora can generate videos up to a minute long while maintaining visual quality and adherence to the user’s prompt."

"Sora can also create multiple shots within a single generated video that accurately persist characters and visual style."

"The current model may struggle with accurately simulating the physics of a complex scene."

"Users will not believe videos anymore due to potential misleading content generated by Sora."

Quotes

"I work as a stop motion animator... I’m intrigued, but also terrified." - OpenAI Forum User

"We’ll be engaging policymakers, educators, and artists around the world to understand their concerns." - OpenAI Representative

"Is it a game over for filmmakers?" - Closing Thoughts Question

Key Insights Distilled From

by Yossy Mendel... at ymcinema.com 02-18-2024

https://ymcinema.com/2024/02/18/sora-text-to-video-model-announced-game-over-for-filmmakers/

Deeper Inquiries

How might AI-generated content like Sora impact traditional filmmaking practices?

AI-generated content like Sora has the potential to revolutionize traditional filmmaking practices in several ways. Firstly, it can significantly reduce production costs by eliminating the need for expensive equipment, sets, and crew members. This could democratize the filmmaking process, allowing more individuals with creative ideas but limited resources to bring their visions to life. Additionally, AI-generated content can speed up the production process since videos can be created quickly from text prompts without the need for extensive planning and shooting schedules. However, this rapid generation of content may also lead to oversaturation in the market and a decrease in quality as quantity takes precedence over creativity.

What ethical considerations should be addressed regarding the use of AI in creative industries?

The use of AI in creative industries raises various ethical considerations that must be addressed. One primary concern is intellectual property rights and copyright issues surrounding AI-generated content. Who owns the rights to videos created by Sora: the user who inputs the text prompt, or OpenAI as the developer? Additionally, there are concerns about job displacement within creative professions such as filmmaking, animation, and CGI if AI technologies like Sora become widespread. It's crucial to consider how these advancements will impact employment opportunities and whether safeguards or retraining programs should be implemented to support those affected by automation.

Furthermore, AI content creation tools like Sora raise ethical concerns about misinformation and deepfakes. The ability to manipulate visuals convincingly through AI poses risks of spreading false information or creating harmful narratives without accountability. Therefore, transparency measures must be put in place so viewers can distinguish between human-created content and AI-generated material.

How can creators adapt to technological advancements like Sora while preserving artistic integrity?

Creators can adapt to technological advancements like Sora while preserving their artistic integrity by embracing collaboration with AI rather than viewing it as a threat. By understanding how tools like Sora work and incorporating them into their workflow strategically, creators can enhance their storytelling capabilities and explore new avenues for creativity.

One approach is using AI as a tool for ideation or inspiration rather than solely relying on it for execution. Creators can leverage Sora's ability to generate visual concepts quickly based on text prompts but then infuse these concepts with their unique style, emotions, and narrative depth during post-production processes.

Moreover, maintaining transparency with audiences about when AI technology was used in creating certain elements of a film or video helps uphold artistic integrity while fostering trust with viewers. By being open about where human creativity intersects with machine assistance, creators can showcase innovation while staying true to their vision.

Ultimately, creators should view technological advancements not as replacements for their skills but as enhancers that offer new possibilities for storytelling.
